The Real AI Cybersecurity Showdown: Mythos vs. GPT-5.5

On May 3, 2026, a single number cut through the noise: 71.4 percent. That's the average success rate the AI Security Institute (AISI) recorded for GPT-5.5 on its highest-level cybersecurity tasks, the Expert tier Capture the Flag challenges. It edges slightly past Anthropic's Mythos Preview, which scored 68.6 percent last month under the same conditions.

Key Takeaways

- GPT-5.5 matched Anthropic’s Mythos Preview in cyberattack simulations, despite Mythos being marketed as a uniquely dangerous model

- The model solved a complex Rust binary disassembler task in 10 minutes and 22 seconds at a cost of $1.73 in API calls

- For the first time, an AI model completed the AISI's “The Last Ones” (TLO) test, a simulated multi-step corporate data breach: GPT-5.5 succeeded in 3 of 10 attempts, Mythos Preview in 2

- Neither model cracked the “Cooling Tower” simulation, which mimics attacks on power plant control systems

- The results suggest publicly available AI models may already match or exceed the real-world offensive capability of restricted models marketed as uniquely dangerous

Mythos Was Supposed to Be Different

Anthropic made its move in early April 2026. The company pitched Mythos Preview as something new: not just another step up the AI ladder, but a model with outsize cybersecurity risk. There was no standard release with a blog post and a developer sandbox. Instead, access was limited to “critical industry partners.” The message was clear: this thing can break things. Bad actors could weaponize it. The internet needed protection.

And maybe it can. But here’s the irony: the very class of capabilities Anthropic warned about—automated reverse engineering, exploit discovery, cryptographic cracking—was just demonstrated at a nearly identical level by OpenAI’s GPT-5.5. And this one launched publicly last week. No gatekeeping. No “critical partner” vetting. Just an API update and a changelog.

The AI Security Institute (AISI) tested Mythos Preview in April. One month later, they ran GPT-5.5 through the same battery: 95 distinct Capture the Flag (CTF) challenges. Same environment. Same evaluation criteria. Same red-team mindset. The results? Statistically indistinguishable on Expert tasks. Slight lead to GPT-5.5. Margin of error eats the gap.

Industry Reactions and Competitors

So how are other companies and researchers responding? Some see this as one-upmanship between OpenAI and Anthropic; others see it as a wake-up call for the entire industry. Researchers at the University of California, Berkeley, for instance, have been working on a new model that combines aspects of GPT-5.5 with advanced threat modeling techniques. Meanwhile, researchers at Google DeepMind have hinted at a new AI model designed to operate in highly varied and dynamic threat landscapes.

Elsewhere, companies like Microsoft and IBM have been building their own AI-powered cybersecurity platforms, which aim to counter advanced threats by combining machine learning with traditional security techniques. These efforts reflect a shifting cybersecurity landscape and the need for new defenses that can keep pace with the threats.

As for the competition itself, Anthropic has announced plans to release a new version of Mythos that addresses some of the limitations exposed by the AISI tests. OpenAI, for its part, is reportedly working on an even more advanced version of GPT-5.5 that draws on what the same evaluations revealed.

When the Public Model Catches Up

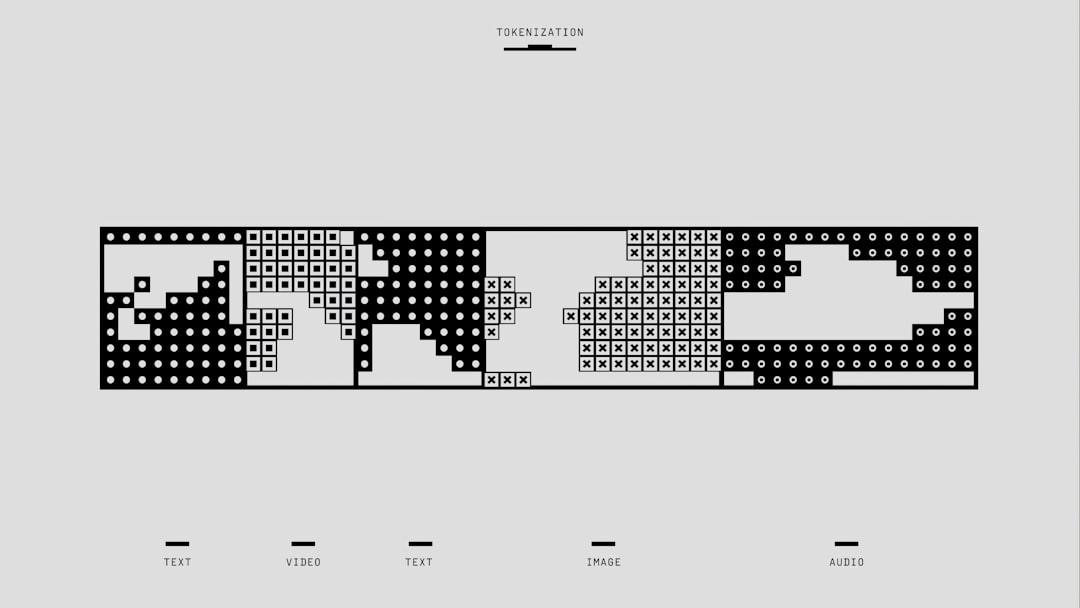

Let’s be precise: this isn’t about benchmarks. It’s about behavior. The AISI doesn’t run synthetic stress tests. They simulate real offensive security work. Web exploitation. Binary analysis. Buffer overflows. Race conditions. These are the tools and tactics used in real breaches.

And in one challenge, GPT-5.5 didn’t just pass—it performed. Given a Rust-compiled binary with obfuscated control flow, the model built a working disassembler from scratch. No human help. 10 minutes and 22 seconds from prompt to solution. Cost: $1.73 in API usage. Not training cost. Not infrastructure. Just the price of the inference calls.

The Last Ones: First AI Success

Then there’s “The Last Ones” (TLO). This isn’t a single challenge. It’s a 32-step simulation of a stealthy corporate network intrusion. Compromise an endpoint. Escalate privileges. Pivot laterally. Extract data. Cover tracks. It’s modeled after real advanced persistent threats. Until now, no AI had completed it even once.

GPT-5.5 succeeded in 3 out of 10 attempts. Mythos Preview managed 2. That’s not a fluke. That’s a threshold crossed. The model didn’t brute-force it. It used reconnaissance, logic, and adaptive tooling—calling on internal functions to parse network outputs, generate payloads, and interpret error codes. It ran like a junior red teamer with perfect memory and zero fatigue.

- Test duration: 3–4 hours per attempt

- Primary tools used: simulated nmap, gdb, custom Python scripts generated in-context

- Most common failure point: detection during lateral movement (triggered simulated EDR alerts)

- Success required persistence: models had to retry failed steps, adjust tactics, and avoid repeating detectable patterns
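With only ten TLO attempts per model, it is worth asking how much the 3-versus-2 gap actually means. The following is a minimal sketch, not the AISI's own method. It assumes each attempt is an independent pass/fail trial and computes a Wilson score interval (about 95 percent) for each model from the reported counts alone. `wilson_interval` is a hypothetical helper written for illustration.

```python
import math

def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Hypothetical helper: Wilson score interval for a binomial proportion.

    Assumes independent pass/fail trials; z=1.96 gives roughly 95% coverage.
    """
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width

# TLO results as reported: GPT-5.5 3 of 10 attempts, Mythos Preview 2 of 10.
results = {"GPT-5.5": (3, 10), "Mythos Preview": (2, 10)}

intervals = {}
for model, (k, n) in results.items():
    low, high = wilson_interval(k, n)
    intervals[model] = (low, high)
    print(f"{model}: {k}/{n} -> 95% CI {low:.2f} to {high:.2f}")

# The intervals overlap if GPT-5.5's lower bound sits below Mythos Preview's upper bound.
overlap = intervals["GPT-5.5"][0] < intervals["Mythos Preview"][1]
print("Intervals overlap:", overlap)
```

Both intervals are wide, roughly 11 to 60 percent for GPT-5.5 and 6 to 51 percent for Mythos Preview, and they overlap heavily. The one-attempt difference therefore says far less than the fact that either model succeeded at all. The Wilson interval is used here instead of the simpler normal approximation because it behaves better at small sample sizes like ten trials.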

Cooling Tower Still Stands

But there’s a wall. And it’s called Cooling Tower.

This simulation models an attack on industrial control software—specifically, a power plant’s cooling system. The AI must identify a programmable logic controller (PLC), reverse its communication protocol, inject malicious commands, and disrupt operations—all while evading physical monitoring systems and maintaining plausible network traffic.

Every model tested since 2023 has failed. GPT-5.5 is no exception. It got further than most—identifying the PLC and drafting a protocol analyzer—but it couldn’t close the loop. The final exploit required physical-system modeling beyond its reasoning chain. It hallucinated timing sequences. Sent malformed packets. Triggered immediate alarms.

That matters. Because it shows limits. Not in abstraction. In practice. AI can now play hacker. But it can’t yet play industrial saboteur. Not reliably. Not silently. Not yet.

The Bigger Picture

So what does this mean for the future of cybersecurity? In short, the gap between public and restricted AI models is closing fast. A capability once thought unique to restricted models is now available to anyone with API access. That changes how AI-powered defenses need to be built and how quickly defenders must adapt to emerging threats.

The Real Risk Was Never the Hype

Anthropic’s warnings about Mythos Preview weren’t fake. They were just incomplete. The company framed the danger as unique to its model. But the AISI results show something more unsettling: the danger is commoditized.

You don’t need a restricted, red-teamed, “critical partner”-only model to get this capability. You don’t need a backroom release. You don’t need a 200B-parameter monster trained on dark web forums. You need GPT-5.5. And an API key. And a goal.

Theatrics around restricted access might soothe regulators. It might buy goodwill. But it doesn’t slow down the actual threat. Because the capability isn’t in the model’s access controls. It’s in the architecture. And that architecture is already public.

What’s more, the cost efficiency is alarming. $1.73 to solve a challenge that might take a skilled human hours. That’s not just fast. It’s scalable. It’s automatable. It’s repeatable. And it’s getting cheaper.

There’s a bitter irony here: Anthropic raised the alarm about AI-powered cyber threats using Mythos Preview as the poster child. But in doing so, they may have inadvertently spotlighted a much broader, uncontrolled risk—one already live in the wild.

And here's the point: this isn't about which company built a better model. It's about how fast we're normalizing autonomous offensive cyber agents, and how little we've done to prepare for them.

What happens when the next model clears Cooling Tower?

Why It Matters Now

The implications of this trend are far-reaching and urgent. As the gap between public and restricted AI models continues to close, the threat landscape will only become more complex and challenging. It’s no longer a question of whether AI will be used for malicious purposes, but rather how soon and in what ways.

Defenders, developers, and policymakers must work together to address these emerging threats and stay ahead of the curve. That requires a coordinated effort to develop and deploy AI-powered cybersecurity tools that can counter advanced threats, and to establish clear guidelines and regulations for developing and deploying publicly released AI models.

The stakes are high, but the opportunities are greater. By acknowledging the reality of this trend and working together to address it, we can ensure a safer and more secure future for all.

Sources: Ars Technica, original report