0.8. That’s the approximate maturity score assigned informally by internal assessors across the large financial institutions reviewed by Australia’s prudential watchdog in late 2025. Not 80%, not a letter grade, but a decimal, whispered during debriefs: a system-wide average that captures just how underdeveloped AI risk controls are, even as AI agents now handle loan applications, customer interactions, and code generation across Australia’s biggest banks and superannuation funds.

Key Takeaways

- APRA found all major financial institutions it reviewed are using AI, but risk management maturity varies widely — with most still building foundational controls.

- Boards show strong interest in AI’s productivity benefits but often rely on vendor summaries, lacking deep understanding of model behavior and failure modes.

- Critical gaps exist in monitoring, change management, decommissioning, and ownership — with many AI tools lacking named human owners or inventories.

- Cybersecurity risks are escalating: AI introduces new attack vectors like prompt injection, insecure integrations, and unmanaged non-human identities.

- Some institutions face single-vendor dependency with no exit strategy — a dangerous blind spot as AI becomes embedded in core systems.

AI Is Everywhere — But No One’s Really In Charge

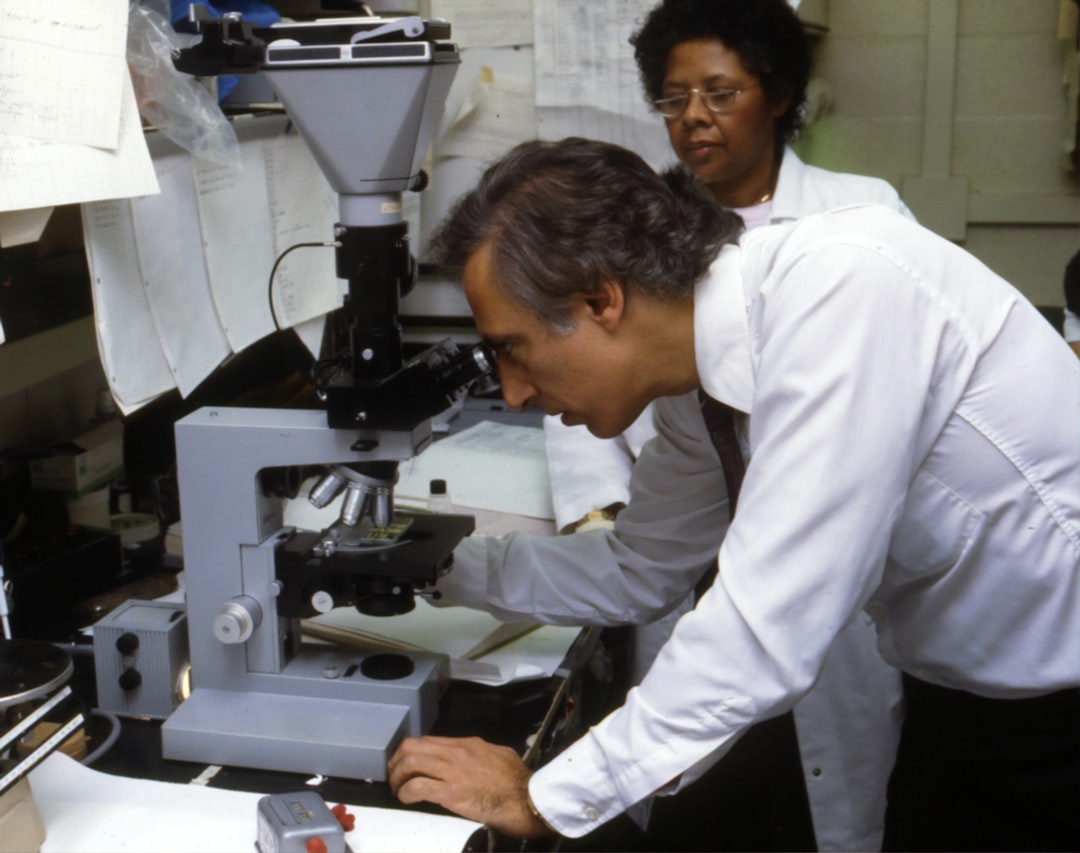

The Australian Prudential Regulation Authority (APRA) didn’t set out to shock. Its late-2025 review of AI adoption across major financial entities was meant to be a baseline check-in. What it found looked more like critical infrastructure running without a map: AI agents woven into software engineering pipelines, claims triage systems, and customer service bots, yet no consistent inventory of where these tools were deployed, who owned them, or how they made decisions.

At one institution, developers were using AI to generate 60% of new application code — but the security team had no visibility into the models generating it. At another, AI was auto-approving low-risk loan applications, but the board had never seen a failure scenario analysis. The pattern wasn’t isolated. It was systemic.

APRA didn’t name names, but its findings paint a picture of institutions racing to deploy AI while treating governance as an afterthought. Boards were enthusiastic about AI’s potential for efficiency and customer experience — but their oversight was shallow. Many were relying on vendor presentations and executive summaries rather than probing the mechanics of model drift, bias, or error cascades.

That’s not oversight. That’s outsourcing.

Boards Don’t Understand the Machines They’re Betting On

Here’s the uncomfortable truth APRA surfaced: financial leaders are making strategic decisions about AI without understanding what they’re actually deploying. They know AI can speed up loan processing. They know it can reduce call center costs. But they don’t know how a model might flip from helpful assistant to toxic bot, or how a minor prompt injection could cascade into a system-wide failure.

And that’s where the risk explodes.

The regulator explicitly called out the gap between board-level enthusiasm and operational reality. Boards need to move beyond high-level briefings and develop a working understanding of AI — not to become data scientists, but to ask the right questions. What happens when the model fails? Who is responsible? How do we detect bias in real time? What’s the fallback?

Too often, the answer was: we don’t know.

Some institutions were treating AI risks like any other IT project — applying the same controls used for database upgrades or network patches. But AI isn’t just another tool. It’s dynamic. It learns. It adapts. It hallucinates. It can be manipulated. And when it’s embedded in critical operations, a failure isn’t a downtime alert — it’s a regulatory event.

Where the Control Breaks Down

APRA identified three core failure points in AI governance:

- Model behavior monitoring: Few institutions had real-time tracking of AI decision patterns, drift, or anomalous outputs.

- Change and decommissioning: There were no standardized procedures for updating or retiring AI models — meaning outdated or compromised agents could linger undetected.

- Ownership and inventory: Many AI tools were deployed without a named owner or inclusion in asset registers — a governance black hole.
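To make the first failure point concrete, here is a minimal sketch of one common way to watch for drift: the Population Stability Index (PSI), which compares the distribution of a model's recent scores against a baseline. This is an illustration, not something APRA prescribes. The function names, the assumption that scores fall in [0, 1], and the 0.1 / 0.25 thresholds (a widely used rule of thumb) are all assumptions made for the example.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between two samples of model scores, each score assumed in [0, 1]."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    def proportions(scores: Sequence[float]) -> list:
        counts = [0] * bins
        for s in scores:
            # Clamp so that 1.0 (and any out-of-range score) lands in an edge bin.
            idx = min(max(int(s * bins), 0), bins - 1)
            counts[idx] += 1
        # Floor at eps so an empty bin does not produce log(0).
        return [max(c / len(scores), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def drift_status(psi: float) -> str:
    """Map a PSI value to a label (thresholds are a rule of thumb, not a standard)."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "watch"
    return "drift"
```

A scheduled job could compute this on each day's scores and page the model's owner when the status reaches "drift". PSI only catches shifts in the score distribution; it says nothing about whether individual decisions are correct.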

This isn’t about being overcautious. It’s about basic operational hygiene. You can’t secure what you can’t see. You can’t fix what you can’t trace. And you can’t explain what you didn’t document.
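The ownership and inventory gap is the easiest one to sketch in code. The example below is a hypothetical in-memory register, assuming each AI tool is recorded with a named owner, a vendor, and a last-review date. The class names, fields, and the 180-day review window are illustrative assumptions, not APRA requirements.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass(frozen=True)
class AIAsset:
    name: str
    purpose: str
    vendor: str
    owner: str           # a named person, not a team alias
    last_reviewed: date


class AIInventory:
    """Hypothetical register that refuses AI tools with no named owner."""

    def __init__(self) -> None:
        self._assets: Dict[str, AIAsset] = {}

    def register(self, asset: AIAsset) -> None:
        if not asset.owner.strip():
            raise ValueError(f"{asset.name}: a named owner is required")
        if asset.name in self._assets:
            raise ValueError(f"{asset.name}: already registered")
        self._assets[asset.name] = asset

    def overdue_for_review(self, today: date, max_age_days: int = 180) -> List[str]:
        """Names of assets whose last review is older than max_age_days."""
        return sorted(
            a.name for a in self._assets.values()
            if (today - a.last_reviewed).days > max_age_days
        )

    def by_vendor(self) -> Dict[str, List[str]]:
        """Group asset names by vendor to expose concentration on one provider."""
        grouped: Dict[str, List[str]] = {}
        for a in self._assets.values():
            grouped.setdefault(a.vendor, []).append(a.name)
        return grouped
```

In practice this would live in the existing asset register rather than a standalone class. The point of the sketch is the rule it enforces: no owner, no deployment.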

And yet, in 2026, some of Australia’s most critical financial infrastructure runs on AI systems that no one can fully account for.

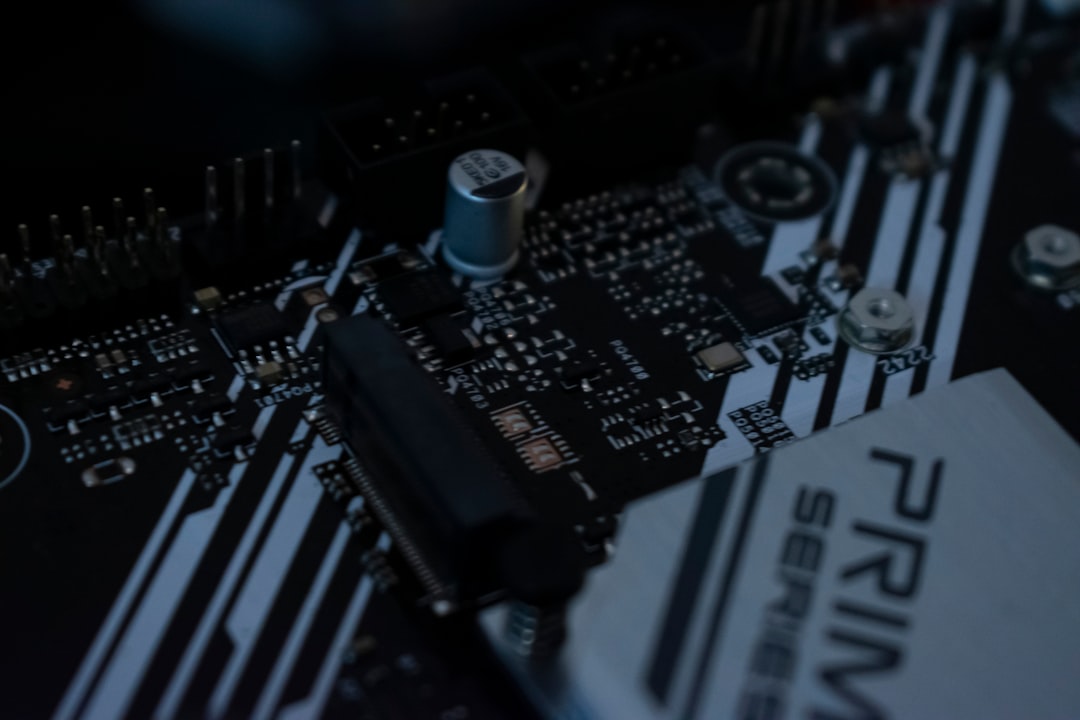

The Cybersecurity Blind Spot: AI as Attack Surface

AI isn’t just a productivity tool. It’s a new attack surface. APRA flagged a disturbing trend: institutions adopting AI without updating their cybersecurity frameworks to account for non-human actors.

AI agents now have access keys, API permissions, and even privileged system rights — but identity and access management (IAM) policies haven’t caught up. Many IAM systems still assume every actor is a human with a password and MFA. They don’t account for bots that authenticate via tokens, operate 24/7, and can be hijacked through poisoned prompts.

Prompt injection attacks, for example, can trick AI agents into leaking data, executing unauthorized commands, or bypassing security controls. And because these agents are often integrated into backend systems, a single compromised model can become a pivot point for lateral movement.

APRA called for privileged access management for agentic workflows, just like you’d have for a sysadmin. That means just-in-time access, session logging, and least-privilege permissions. It also means security testing of AI-generated code — not just the final application, but the intermediate steps where vulnerabilities can be silently introduced.
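What just-in-time, least-privilege access with session logging might look like for an agent can be sketched in a few lines. The broker below is a hypothetical illustration, not a real IAM product or anything APRA specified: the class names, scope strings, and 15-minute lifetime are assumptions chosen for the example.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, FrozenSet, Iterable, List, Optional, Set, Tuple


@dataclass(frozen=True)
class Grant:
    token: str
    agent_id: str
    scopes: FrozenSet[str]
    expires_at: datetime


class AgentAccessBroker:
    """Issues short-lived, narrowly scoped grants to non-human identities."""

    def __init__(self, allowed_scopes: Dict[str, Set[str]],
                 ttl: timedelta = timedelta(minutes=15)) -> None:
        self._allowed = allowed_scopes
        self._ttl = ttl
        self._grants: Dict[str, Grant] = {}
        self.session_log: List[Tuple[datetime, str, str, str]] = []

    def request(self, agent_id: str, scopes: Iterable[str],
                now: Optional[datetime] = None) -> Grant:
        now = now or datetime.now(timezone.utc)
        requested = frozenset(scopes)
        detail = ",".join(sorted(requested))
        # Least privilege: refuse anything outside the agent's allowlist.
        if not requested <= self._allowed.get(agent_id, set()):
            self.session_log.append((now, agent_id, "request_denied", detail))
            raise PermissionError(f"{agent_id} requested scopes outside its allowlist")
        # Just-in-time: the grant carries only what was asked for, and it expires.
        grant = Grant(secrets.token_urlsafe(32), agent_id, requested, now + self._ttl)
        self._grants[grant.token] = grant
        self.session_log.append((now, agent_id, "granted", detail))
        return grant

    def authorize(self, token: str, scope: str,
                  now: Optional[datetime] = None) -> bool:
        now = now or datetime.now(timezone.utc)
        grant = self._grants.get(token)
        ok = grant is not None and now < grant.expires_at and scope in grant.scopes
        agent = grant.agent_id if grant else "unknown"
        # Session logging: every check is recorded, allowed or not.
        self.session_log.append((now, agent, "allowed" if ok else "blocked", scope))
        return ok
```

The design choice worth noting is that a grant covers only the scopes requested for the task, not everything the agent is allowed to have. A hijacked agent holding a read-only grant cannot escalate without going back through the broker, where the attempt is logged.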

One bank reported that 40% of its new code in 2025 was AI-generated, but less than 10% of that code had undergone manual security review. The rest, more than a third of all new code that year, relied on automated scanners that weren’t designed for AI artifacts.

That’s not a pipeline. That’s a liability conveyor belt.

The Vendor Lock-In Trap

Another red flag: dependency on single AI vendors. APRA found that some institutions had built multiple AI applications on one provider’s platform — customer service bots, underwriting models, even internal knowledge assistants — with no plan to switch if that provider failed, changed pricing, or got acquired.

Only a few could demonstrate a substitution strategy. None had fully tested an exit.

And it gets worse. AI isn’t just in the apps you build — it’s in the dependencies. A third-party library might use AI under the hood to optimize queries or generate responses, and you’d never know. APRA warned that entities may not even be aware of how deeply AI is embedded in their supply chain.

This isn’t theoretical. In early 2026, a major cloud provider had to patch a widely used NLP library after researchers discovered it was secretly calling an external AI service — exfiltrating user data in the process. The breach wasn’t in the app. It was in the dependency.
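A first, partial step toward seeing AI in the supply chain is to flag declared dependencies that are known AI SDKs. The sketch below assumes a Python project with pip-style requirement lines. The watchlist is a short illustrative sample of real PyPI package names, not a complete list, and the function names are hypothetical.

```python
import re
from typing import Iterable, List, Set

# Illustrative sample only; a real watchlist would be maintained and much longer.
AI_SDK_WATCHLIST: Set[str] = {
    "openai", "anthropic", "cohere",
    "transformers", "langchain", "google-generativeai",
}

_NAME = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """Normalize a package name (lowercase; runs of -, _, . become a single -)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def find_ai_dependencies(requirement_lines: Iterable[str],
                         watchlist: Set[str] = AI_SDK_WATCHLIST) -> List[str]:
    """Return the watchlisted packages named in pip-style requirement lines."""
    hits = set()
    for raw in requirement_lines:
        line = raw.split("#", 1)[0].strip()   # drop comments
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        match = _NAME.match(line)
        if match and normalize(match.group(1)) in watchlist:
            hits.add(normalize(match.group(1)))
    return sorted(hits)
```

This only catches dependencies that are declared by name. It would not detect a library that quietly calls an external AI service over the network, which takes egress monitoring rather than a manifest scan.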

Human Judgment Can’t Be Automated

Amid all the talk of automation, APRA made one thing clear: **high-risk decisions must include human involvement**. That means loan denials, fraud flags, and customer account closures shouldn’t be left to AI alone.

Not because AI isn’t capable. But because accountability can’t be outsourced.

When an AI denies a pensioner’s claim, someone has to answer for it. When a model flags a transaction as fraudulent, someone has to review the context. And when things go wrong — and they will — there has to be a human chain of responsibility.
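One way to turn that chain of responsibility into a checkpoint rather than a checkbox is to make the code path refuse to finalize a high-risk action without a named reviewer. The sketch below is a hypothetical illustration: the action names mirror the examples in this section, and the types and function are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Actions treated as high-risk in this sketch, taken from the examples above.
HIGH_RISK_ACTIONS = {"deny_loan", "flag_fraud", "close_account"}


@dataclass(frozen=True)
class ModelDecision:
    case_id: str
    action: str
    confidence: float


@dataclass(frozen=True)
class FinalDecision:
    case_id: str
    action: str
    decided_by: str  # reviewer name, or "model" for low-risk actions


def finalize(decision: ModelDecision,
             reviewer: Optional[str] = None,
             reviewer_action: Optional[str] = None) -> FinalDecision:
    """Finalize a decision; high-risk actions require a named human reviewer."""
    if decision.action in HIGH_RISK_ACTIONS:
        if not reviewer:
            raise PermissionError(
                f"case {decision.case_id}: '{decision.action}' needs a human reviewer"
            )
        # The reviewer may confirm the model's proposal or override it.
        return FinalDecision(decision.case_id,
                             reviewer_action or decision.action,
                             decided_by=reviewer)
    return FinalDecision(decision.case_id, decision.action, decided_by="model")
```

Note that model confidence plays no part in the gate. A highly confident denial still goes to a person, because the requirement is about accountability, not accuracy.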

That’s not a limitation of technology. That’s a requirement of trust.

And yet, in the rush to cut costs and scale operations, some institutions were pushing AI into domains where human oversight was minimal or symbolic. A checkbox, not a checkpoint.

APRA’s message was unambiguous: if you can’t explain the decision, you shouldn’t be making it.

What This Means For You

If you’re a developer or builder working on AI systems — especially in regulated environments — this report is a wake-up call. Governance isn’t someone else’s problem. It’s yours.

Start by mapping every AI instance you deploy. Assign a real person as owner. Log every change. Test every output. Assume your model will be attacked — not through the UI, but through the prompt, the API, the dependency chain.

And stop treating AI as just another feature. It’s a new class of system with new failure modes. Your CI/CD pipeline needs AI-specific gates: bias checks, adversarial testing, access reviews. Your incident response plan needs an AI failure playbook. And your code reviews must include AI-generated artifacts — because that code is yours, even if the model wrote it.
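As one example of an AI-specific gate, the sketch below checks the gap in approval rates between groups (often called demographic parity difference) on a labeled evaluation set. It assumes records arrive as (group, approved) pairs. The 0.10 threshold and the function names are illustrative assumptions; the right fairness metric and tolerance depend on the product and the applicable law.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, bool]  # (group label, was the application approved?)


def approval_rates(records: Iterable[Record]) -> Dict[str, float]:
    """Approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in records:
        totals[group] += 1
        approved[group] += int(was_approved)
    if not totals:
        raise ValueError("no records to evaluate")
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(records: Iterable[Record]) -> float:
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(records)
    return max(rates.values()) - min(rates.values())


def bias_gate(records: Iterable[Record], max_gap: float = 0.10) -> bool:
    """True if the model passes the gate, False if the gap is too wide."""
    return parity_gap(records) <= max_gap
```

In a pipeline, a failing gate would exit non-zero and block the release, the same way a failing unit test does.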

For founders and tech leads: if you’re building AI tools for financial services, don’t assume your customers have their house in order. They don’t. Your product needs built-in audit trails, model provenance, and escape hatches. Make it easy to monitor, easy to replace, easy to explain.
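A built-in audit trail can be made tamper-evident with a simple hash chain, where each entry's hash covers the previous entry's hash. The sketch below is a minimal in-memory illustration using only the Python standard library. The class name and the payload fields used in the usage note are hypothetical, and a production system would also need durable, access-controlled storage.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: Dict) -> str:
    # Canonical JSON so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only log where each entry commits to everything before it."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    def append(self, payload: Dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"payload": payload, "prev_hash": prev, "hash": _digest(prev, payload)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev:
                return False
            if entry["hash"] != _digest(prev, entry["payload"]):
                return False
            prev = entry["hash"]
        return True
```

Recording the model version and a prompt or input hash in each payload, for example `{"model_version": "v3", "case_id": "c1", "action": "approve_loan"}`, gives a customer's auditors provenance they can check independently.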

The era of unaccountable AI in finance is over. APRA just drew the line.

The real question isn’t whether banks will fix their AI governance. It’s whether they’ll do it before the next failure makes headlines. As of May 6, 2026, we already know how fragile the system is.

Sources: AI News, original report