The device keeps working at 700°C. That’s not a simulation. It’s not a short burst. It’s sustained operation at roughly 1300°F (1292°F, to be exact), a temperature in the range of cooler lava flows, where every mainstream chip has long since failed.

Key Takeaways

- The new memory component functions at 700°C (about 1300°F), far beyond silicon’s failure point of ~150°C.

- Engineered from a layered stack of aluminum nitride, hafnium oxide, and tantalum, the structure resists atomic degradation under extreme heat.

- The breakthrough originated from an accidental discovery during materials stress testing, not a targeted AI project.

- It enables on-device computation in environments like jet engines, deep-earth drilling, and Venus missions—no shielding or cooling needed.

- This isn’t just durability—it’s a new mechanism for heat-resistant electron confinement that could redefine chip design.
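As a quick check on the headline number, here is a minimal sketch of the standard Celsius-to-Fahrenheit conversion. The function name is illustrative and does not come from the original report.

```python
def celsius_to_fahrenheit(temp_c: float) -> float:
    """Standard conversion: F = C * 9/5 + 32."""
    return temp_c * 9 / 5 + 32


# The demonstrated operating temperature quoted in the article.
print(celsius_to_fahrenheit(700))  # 1292.0, which the article rounds to 1300°F
```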

Not Just Survival—Functionality at Meltdown Temperatures

Most electronics fail long before 200°C. Even specialized high-temperature chips tap out around 250°C. At 700°C, copper interconnects slump. Silicon wafers warp. Doping profiles diffuse into noise. The entire foundation of modern computing collapses.

But this device doesn’t just survive. It computes. It stores data. It switches states. And it does so without thermal runaway or leakage currents—the usual death knell for circuits under heat stress.

The team achieved this using a heterostructure few expected to hold up: aluminum nitride as a thermal barrier, hafnium oxide for ferroelectric switching, and tantalum as a stable electrode. The real shock? The interface between these layers forms a self-stabilizing potential well that traps electrons even as atomic vibrations spike. That’s not incremental improvement. That’s a new physical regime.

The Accident That Broke the Heat Barrier

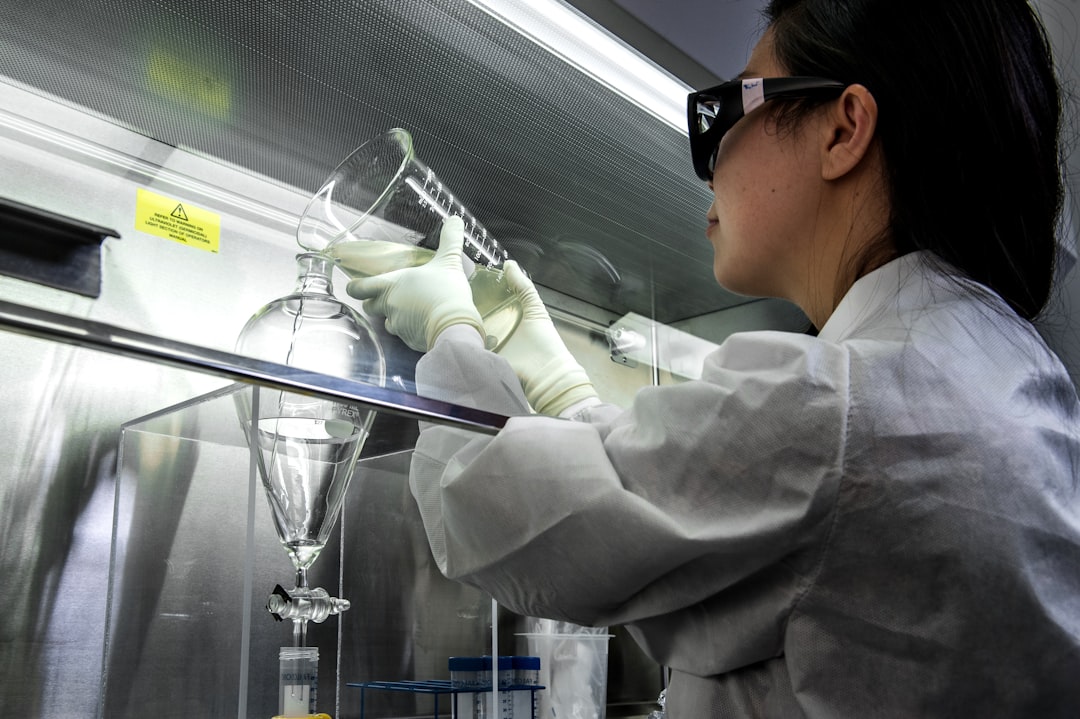

The discovery wasn’t in the grant proposal. It emerged when researchers at the University of Chicago’s Materials Engineering Lab ran a routine durability test on a prototype memory cell. They cranked the furnace to 700°C to induce failure—standard protocol for stress validation. But the device didn’t fail.

“We thought the sensors were wrong,” said Dr. Lena Cho, lead engineer on the project. “We checked the readouts three times. Then we left it running for 72 hours. Still no data decay. That’s when we realized we weren’t seeing endurance. We were seeing something else.”

What they saw was a previously undocumented charge confinement effect. At extreme temperatures, phonon-electron interactions in the hafnium oxide layer create a dynamic energy barrier, something like a vibrating dam, that keeps electrons from scattering. It only kicks in when the heat is high enough: below 500°C, it’s inactive. Above that threshold, at least up to the 700°C tested, the hotter it gets, the more stable the memory state becomes.

Why Heat Was the Forgotten Limiter

For decades, Moore’s Law focused us on shrinking transistors, not hardening them. Power efficiency? Yes. Clock speed? Relentlessly. But thermal tolerance? That got outsourced to cooling systems—fans, heat sinks, liquid loops. We built data centers the size of airports just to keep chips from frying.

The assumption was simple: keep the silicon cool, and everything works. But that assumption chains AI to controlled environments. It forces robotics to carry radiators. It makes edge computing in turbines or reactors impossible without bulky, failure-prone thermal management.

Now, for the first time, we’re seeing a path to computing where heat isn’t the enemy—it’s the stabilizer.

This Isn’t for Your Laptop

Don’t expect this in your next MacBook. The goal isn’t consumer electronics. It’s autonomy in places we can’t service, monitor, or cool.

Imagine AI sensors embedded in a jet engine’s combustion chamber, adjusting fuel mix in real time based on temperature, pressure, and vibration—no data delay, no external processor. Or drilling systems that map geothermal gradients 10 kilometers below Earth’s surface, where temperatures exceed 500°C and cables can’t reach.

Then there’s space. Venus’s surface averages about 465°C. No lander has survived there much beyond two hours before its electronics failed; the record, held by the Soviet Venera 13, is 127 minutes. This chip could, in principle, last years. That’s not an upgrade. It’s a gateway to planetary exploration we’ve treated as off-limits for half a century.

- 700°C: maximum operating temperature demonstrated

- 72 hours: continuous operation without data loss in initial tests

- 3 materials: aluminum nitride, hafnium oxide, tantalum—no silicon involved

- 0 cooling: operates in open ambient heat, no shielding

- 1 mechanism: phonon-driven electron confinement, activated by heat

The AI Angle: Intelligence Where We Couldn’t Put Brains Before

Here’s where this flips from materials science to AI strategy. We keep talking about “edge AI” like it’s happening in your doorbell. But the real edge isn’t suburban driveways. It’s inside reactors. Down oil wells. On the surface of other planets.

Today’s AI runs in data centers or gets watered down to run on microcontrollers. Neither works in extreme environments. You can’t train a model on Mars. You can’t stream sensor data from a volcano. Latency, bandwidth, and survival time all kill the idea.

But with this chip, you could deploy a neural network directly in the environment—trained offline, then baked into hardware that operates indefinitely under fire, so to speak. No data transmission. No round-trip to Earth. Just local inference, real-time adaptation, and survival.
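To make "trained offline, then baked into hardware" concrete, here is a minimal sketch of fixed-weight local inference. Everything in it is an assumption for illustration: the sensor inputs, the weights, the bias, and the function names are invented, and nothing here reflects how the actual device would be programmed.

```python
import math

# Hypothetical frozen parameters, as if produced by offline training.
# The values are made up for illustration only.
WEIGHTS = (0.004, 0.02, 1.5)  # temperature (°C), pressure (bar), vibration (g)
BIAS = -4.0


def predict_anomaly(temp_c: float, pressure_bar: float, vibration_g: float) -> float:
    """Return a score between 0 and 1 from a single fixed-weight neuron."""
    z = (WEIGHTS[0] * temp_c
         + WEIGHTS[1] * pressure_bar
         + WEIGHTS[2] * vibration_g
         + BIAS)
    return 1.0 / (1.0 + math.exp(-z))  # logistic activation


def decide(reading: tuple[float, float, float], threshold: float = 0.5) -> str:
    """Make the decision locally; nothing is transmitted off the device."""
    return "adjust" if predict_anomaly(*reading) >= threshold else "hold"


print(decide((600.0, 30.0, 0.2)))
print(decide((700.0, 40.0, 1.0)))
```

The point of the sketch is the shape of the workload: the parameters never change after deployment, so the hardware only has to hold them and evaluate them, which is the job a heat-stable memory would do.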

What It Means for Hardware Design

This isn’t a drop-in replacement. It’s a challenge to the entire stack. Foundries aren’t set up to deposit hafnium oxide on aluminum nitride. PCBs can’t handle these temperatures. Even wire bonding fails long before 700°C.

But the bigger shift is conceptual. We’ve spent 50 years designing chips to avoid heat. Now, we may need to design systems that require it. That flips thermal management on its head. What if future AI hardware includes miniature heaters—not to keep things warm, but to keep them stable?
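The heater idea can be sketched as a simple on/off controller with hysteresis. The 500°C activation threshold and the 700°C demonstrated maximum come from this article; the switching points and function names are hypothetical. The sketch also assumes the device needs the confinement effect active to hold data, which the report does not state.

```python
ACTIVATION_C = 500.0        # from the article: effect is inactive below this
MAX_DEMONSTRATED_C = 700.0  # from the article: highest temperature demonstrated
HEATER_ON_BELOW_C = 520.0   # hypothetical: switch the heater on under this
HEATER_OFF_ABOVE_C = 540.0  # hypothetical: switch the heater off over this


def heater_should_run(temp_c: float, heater_on: bool) -> bool:
    """Bang-bang control with hysteresis to avoid rapid on/off toggling."""
    if temp_c < HEATER_ON_BELOW_C:
        return True
    if temp_c > HEATER_OFF_ABOVE_C:
        return False
    return heater_on  # inside the band: keep the current state


def in_demonstrated_window(temp_c: float) -> bool:
    """True if the temperature lies between activation and the tested maximum."""
    return ACTIVATION_C <= temp_c <= MAX_DEMONSTRATED_C


# Venus's average surface temperature, as quoted in the article.
print(heater_should_run(465.0, heater_on=False), in_demonstrated_window(465.0))
```

Under these assumptions, an ambient temperature of 465°C sits just below the activation threshold, so even on Venus a small heater would run rather than a cooler.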

What Competitors Are Pursuing—And Falling Short

Other labs and companies have tried to tackle high-temperature electronics, but with limited success. GE Research has experimented with silicon carbide (SiC) for turbine monitoring, pushing operational limits to around 500°C. But SiC chips still rely on traditional transistor architectures that begin to degrade above 400°C, and they require complex packaging to survive even brief exposure to extreme conditions. In 2023, a DARPA-funded project at MIT Lincoln Lab demonstrated a gallium nitride (GaN)-based sensor operating at 600°C, but only for 15 minutes before signal drift rendered it unreliable.

Meanwhile, startups like Tempest Microsystems and ThermaCore are developing ceramic-based circuit boards and refractory metal interconnects, aiming to extend silicon’s lifespan in hot environments. But their solutions still depend on passive cooling or thermal shielding—essentially workarounds, not breakthroughs. None have achieved stable electron confinement at 700°C without external support.

The University of Chicago device stands apart because it doesn’t fight heat. It uses it. No other known material stack exhibits a self-activating confinement mechanism that improves with rising temperature. That’s why major aerospace firms—including Aerojet Rocketdyne and Rocket Lab—have already reached out to the research team for collaboration talks. NASA’s Jet Propulsion Laboratory has also flagged the technology for potential inclusion in its proposed Venus lander mission, VERITAS 2, currently scheduled for launch in 2031.
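A rough way to see the gap is to line up the sustained temperature figures quoted in this article against a target environment. This is a sketch under stated assumptions: the dictionary and function names are invented, the silicon carbide entry uses the degradation onset rather than the 500°C peak, and the gallium nitride result is left out because it held for only 15 minutes.

```python
# Sustained-operation figures as quoted in this article, in °C.
# These are not independent measurements.
TECH_SUSTAINED_LIMIT_C = {
    "conventional silicon": 150,
    "silicon carbide (degradation onset)": 400,
    "AlN/HfO2/Ta memory stack": 700,
}


def survivors(ambient_c: float) -> list[str]:
    """List technologies whose quoted sustained limit covers the ambient temperature."""
    return [name for name, limit in TECH_SUSTAINED_LIMIT_C.items()
            if limit >= ambient_c]


print(survivors(465))  # Venus surface average, as quoted in the article
```

The comparison ignores packaging, interconnects, and lifetime, so it says nothing about whether a complete system would survive; it only shows which quoted limits clear a given temperature.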

The Bigger Picture: Rethinking the Limits of Computation

We tend to measure progress in computing by speed, density, or energy efficiency. But this discovery forces us to ask a different question: where can computation happen? For over 70 years, we’ve built machines that assume a narrow range of environmental stability. Data centers run at 22°C. Phones throttle performance when internal temps hit 45°C. Even Mars rovers carry heaters and insulators to protect delicate silicon from freezing.

Yet some of the most valuable data in the universe is generated in places we can’t compute within. The Earth’s mantle holds clues to geothermal energy, earthquake prediction, and carbon sequestration. Jet engines run at peak efficiency only when combustion is finely tuned in real time, but we can’t put sensors where the heat is highest. Venus, with its runaway greenhouse effect, could teach us about climate tipping points, but every probe that has reached its surface has gone silent within hours.

This chip changes that calculus. It’s not just a component. It’s a redefinition of what “operational environment” means. If we can place intelligence directly in these zones—processing data at the source—we eliminate lag, reduce system complexity, and unlock autonomous decision-making in places previously considered dead zones for electronics. That’s not incremental. It’s foundational.

What This Means For You

If you’re building AI for real-world deployment, this changes your risk calculus. You no longer have to assume that high-temperature environments are off-limits. That opens new markets: industrial AI, aerospace, energy, geophysics. You can start designing models for systems that operate without human intervention for years—because the hardware won’t fail.

For developers, it means rethinking edge computing. You won’t need to compress models to fit low-power chips. You’ll need to build models that run on non-silicon hardware with different failure modes, power profiles, and memory architectures. The software stack doesn’t exist yet. But the moment that chip leaves the lab, someone’s going to need firmware, drivers, and inference frameworks for it—fast.

The most ironic thing here? We’ve been trying to cool AI down for decades. Now, the next leap might come from letting it burn.

Sources: Science Daily Tech, original report