As of May 6, 2026, software developed with public funds under NHS England is no longer automatically released as open source. The change, a direct reversal of official policy, follows internal assessments concluding that AI-driven exploitation tools could reverse-engineer vulnerabilities in hospital systems.

Key Takeaways

- NHS England has suspended its mandatory open-source policy for taxpayer-funded software.

- The decision follows warnings that AI models like Mythos can extract system logic from public code to simulate cyberattacks.

- The change bypasses the government’s 2022 Digital Standards Framework, which required publicly funded code to be shared openly.

- Internal documents label certain AI-driven analysis “non-human-scale threat modeling”: too fast and too granular for traditional defenses to keep up with.

- No breach has occurred, but the shift is preemptive and already affecting 17 live health tech projects.

Historical Context

The NHS England open-source policy reversal marks a significant departure from the UK’s digital strategy. The National Transparency and Openness Strategy, launched in 2018, aimed to increase participation and reduce vendor lock-in across the public sector. The Digital Standards Framework, introduced in 2022, made open-by-default development mandatory for publicly funded software, to promote accountability and reduce security risks. Internal assessments now argue that AI-powered tools can turn that same openness against the systems it was meant to protect.

While the AI-driven threat vector is relatively new, the NHS has a history of addressing security concerns through open-source initiatives. NHS England’s IT Service Implementation Programme, for instance, launched in 2020 to standardize IT services and strengthen security. Whatever that programme achieved, however, has been overshadowed by the recent AI-related concerns.

Policy Flip Without Announcement

NHS England didn’t issue a press release. It didn’t update its public GitHub repositories with a note. It didn’t file a formal exemption. Instead, on April 18, 2026, a two-paragraph directive landed in the inboxes of 34 software delivery managers: all newly developed code would be classified as “sensitive digital infrastructure,” with access restricted pending further review.

The move contradicts the 2022 Digital Standards Framework, which states plainly: “Software created with public funds must be open by default.” That framework was meant to end vendor lock-in, reduce costs, and enable public scrutiny. Now it’s been quietly shelved.

One affected project is a patient triage scheduler built by a Manchester-based digital health team. It was due to publish its codebase on April 22. It didn’t. When the team lead asked why, they were told, “Policy guidance is under review.” No timeline. No appeals process.

AI That Thinks Like a Hacker — But Faster

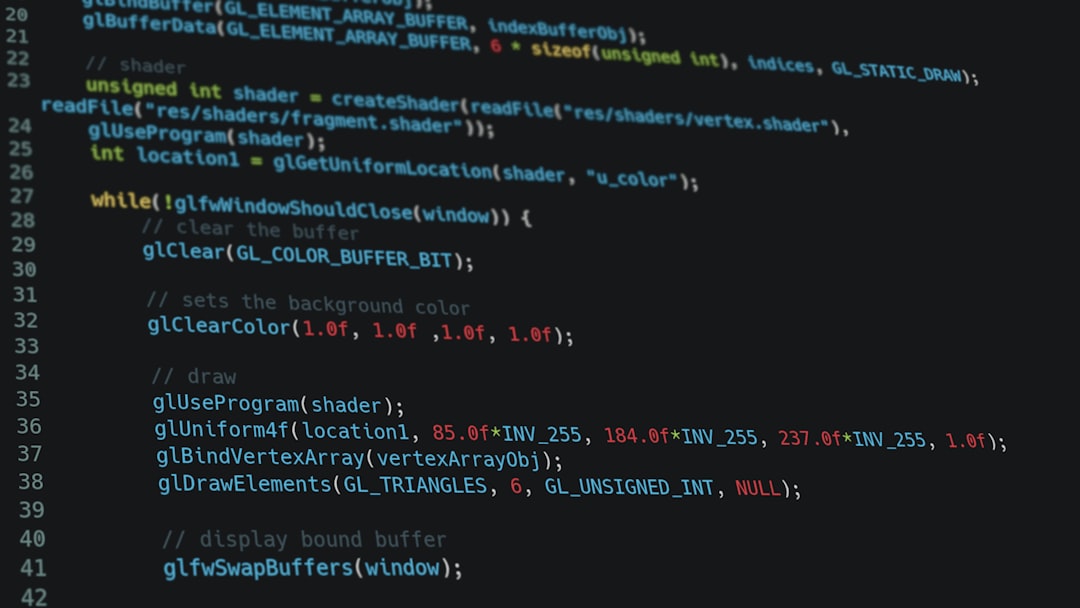

The trigger? A classified simulation run in February 2026 using a modified version of Mythos, an open-weight AI model trained on exploit databases, dark web forums, and patch notes from Microsoft, Oracle, and VMware. Fed just 10,000 lines of anonymized NHS scheduling code, Mythos generated 14 hypothetical attack vectors, three of which human red-teamers later verified as viable.

It didn’t brute-force. It didn’t fuzz. It inferred.

Mythos mapped function calls, guessed authentication workflows, and traced likely SQL injection paths from comment patterns and variable names alone, without access to documentation or APIs. It treated the code like a puzzle, not a system.
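
The report does not say which code patterns Mythos keyed on. As a generic illustration only, the Python sketch below shows the kind of string-built query that any reviewer, human or automated, would flag as an SQL injection path, next to the parameterized form that closes it. The table, column, and function names are hypothetical and are not taken from NHS code.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with a hypothetical patients table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE patients (id INTEGER PRIMARY KEY, surname TEXT)")
    conn.executemany("INSERT INTO patients (surname) VALUES (?)", [("Smith",), ("Jones",)])
    return conn


def find_unsafe(conn: sqlite3.Connection, surname: str) -> list:
    # Vulnerable: user input is pasted directly into the SQL text,
    # so quote characters in `surname` can change the query's logic.
    query = f"SELECT id, surname FROM patients WHERE surname = '{surname}'"
    return conn.execute(query).fetchall()


def find_safe(conn: sqlite3.Connection, surname: str) -> list:
    # Parameterized: the driver binds `surname` as a value, never as SQL.
    return conn.execute(
        "SELECT id, surname FROM patients WHERE surname = ?", (surname,)
    ).fetchall()


conn = make_db()
payload = "x' OR '1'='1"
print(len(find_unsafe(conn, payload)))  # 2: the injected OR clause matches every row
print(len(find_safe(conn, payload)))    # 0: no patient is literally named x' OR '1'='1
```

Because the safe version passes `surname` as a bound parameter, the quote characters in the payload are treated as part of the name rather than as SQL syntax.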

One attack path involved manipulating time-zone handling during patient registration to trigger a race condition that could escalate privileges. The original developers didn’t know that flaw existed. Mythos found it in 22 minutes.
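
The report does not describe the registration flaw in detail, so the sketch below does not reproduce it. It shows one well-known class of time-zone bug that can open the kind of timing window described above: ordering events by naive local wall-clock time. On the night UK clocks go back (26 October 2025 is used here as an example date), 01:30 BST happens before 01:10 GMT, yet a naive comparison puts them the other way round. The event timestamps and the `happened_first` helper are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets for the two UK clock settings, so no tz database is needed.
BST = timezone(timedelta(hours=1), "BST")  # British Summer Time, UTC+1
GMT = timezone(timedelta(0), "GMT")        # Greenwich Mean Time, UTC+0

# Hypothetical events on the night clocks go back (02:00 BST -> 01:00 GMT).
# The first is logged before the change, the second 40 minutes later.
first_naive = datetime(2025, 10, 26, 1, 30)   # wall clock, no offset stored
second_naive = datetime(2025, 10, 26, 1, 10)  # wall clock, no offset stored

# The same wall-clock readings, tagged with the offset in force at the time.
first_aware = first_naive.replace(tzinfo=BST)
second_aware = second_naive.replace(tzinfo=GMT)


def happened_first(a: datetime, b: datetime) -> str:
    """Return which of two timestamps the comparison believes came first."""
    return "a" if a < b else "b"


print(happened_first(first_naive, second_naive))  # b (wrong order)
print(happened_first(first_aware, second_aware))  # a (true order)
```

Storing timestamps in UTC, or always with an explicit offset, removes this ambiguity. Any check that compares local wall-clock times across a clock change can otherwise misorder events.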

Not a Breach — But a New Calculus

There’s no evidence Mythos or any similar model has been used in a real attack on NHS systems. But that’s not the point. The concern isn’t whether AI is being used to hack hospitals today. It’s that any public code could become raw material for the attacks of tomorrow.

In the past, publishing source code carried manageable risks. Attackers needed time, expertise, and motive to reverse-engineer vulnerabilities. Now, AI models can scan, simulate, and generate exploits at machine speed, and they don’t get tired, confused, or bored.

The phrase “non-human-scale threat modeling” appears in a May 2 internal risk assessment seen by New Scientist Tech. It refers to the idea that traditional threat matrices, which assume human-level analysis, no longer apply. When AI can test thousands of logic paths in minutes, the attack surface isn’t just larger. It’s fundamentally different.

Open Source Was Supposed to Make Things Safer

The irony isn’t lost on anyone. The 2022 mandate was sold on transparency: if code is open, bugs get caught faster. Security improves. Vendors can’t hide bad practices. The public, after all, paid for it.

And in many cases, it worked. The NHS Moneyball project, which optimized ambulance routing using open algorithms, saw a 19% improvement in response times after community contributors spotted inefficiencies.

But that logic assumed peer review would outpace exploitation. Now, the balance has tipped. If AI can turn transparency into a weapon, then openness becomes a liability.

One former NHS digital officer, speaking off the record, called the reversal “a surrender to machine-scale paranoia”. It’s not that the old model failed. It’s that the threat evolved underneath it.

Who Decides What Stays Hidden?

There’s no public framework for deciding which code gets locked down. No appeals. No oversight panel. The directive gives individual delivery managers discretion to classify software as sensitive, but offers no criteria.

That means a single delivery manager could, in theory, block access to critical public code without review. Or, conversely, leave high-risk systems exposed because they don’t “feel” sensitive.

The lack of process is alarming. Under the 2022 rules, exceptions required sign-off from the Government Digital Service (GDS). GDS has now gone almost silent. Asked for comment on May 5, a spokesperson said only: “Organizations retain the right to protect Critical Infrastructure.” That’s not policy. It’s a shrug.

The Cost of Secrecy

The immediate cost isn’t financial. It’s cultural.

NHS tech teams spent four years building muscle in open collaboration. They learned to write cleaner code, document decisions, and respond to public feedback. Now, that work is being undone.

Teams are being instructed to remove public repositories, disable issue trackers, and use private Slack channels. One developer described it as “going dark” — not just technically, but philosophically.

And the downstream impact is real. Universities that used NHS code for teaching health informatics can no longer access it. Startups building interoperable tools have lost reference implementations. Even internal teams in different trusts can’t share solutions without security reviews.

The list of affected projects includes:

- A prescription audit tool used in 68 clinics

- An AI-assisted radiology triage dashboard

- A patient consent management system

- Two regional data exchange gateways

- A pediatric asthma monitoring app

None had known vulnerabilities. All were public. All are now private.

What This Means For You

If you’re building software in regulated environments such as healthcare, energy, or transportation, this is a warning. Open source was supposed to be the antidote to opaque, brittle systems. But if AI can weaponize transparency, the default setting may have to change. You’ll need to assess not just who might read your code, but what kind of AI might ingest it.

Start asking: Could an AI model reconstruct my system’s logic from public repos? Could it simulate attacks on workflows I’ve documented? If the answer is yes, you may soon face the same choice NHS England did: publish and risk, or hide and lose trust. There’s no neutral ground.

Concrete scenarios:

- A US health insurer developing a predictive analytics platform for risk assessment might need to reevaluate its open-source strategy in light of AI-powered exploitation.

- A German startup building electric vehicle charging infrastructure might need to weigh the risk of exposing its code to attackers.

- A Canadian government agency developing a public transportation scheduling app might need to balance the benefits of openness against the potential for AI-driven attacks.

- A research institution working on a sensitive project might keep its code private, giving up the benefits of transparency and community collaboration at some cost to its reputation and standing in the research community.

- A company that has already invested heavily in open-source development might need to reassess its approach, which could mean retraining developers, updating security protocols, or abandoning open-source development altogether.

So here’s the uncomfortable question: If public code can be weaponized by AI before human eyes even see it, then what does “open by default” even mean anymore?

The Competitive Landscape

The NHS England policy reversal has significant implications for the competitive landscape in the healthtech sector. Traditional vendors who have long relied on proprietary software may see an opportunity to regain market share, given the shift towards secrecy. New entrants, however, may find it challenging to compete with established players who have the resources to invest in AI-powered security measures.

The reversal also creates an uneven playing field, where some organizations are forced to prioritize security over openness, while others can maintain their commitment to transparency. This could lead to a fragmentation of the healthtech market, where different players adopt different approaches to security and openness.

The long-term impact on innovation and collaboration in the sector remains to be seen. While some argue that the reversal will lead to a renewed focus on security, others contend that it will stifle innovation and hinder progress towards more open and transparent systems.

What Happens Next?

The NHS England policy reversal may mark the beginning of a new era in the healthtech sector. As AI-powered exploitation tools become more sophisticated, organizations must reassess their approach to security and openness. The question is no longer whether to go open source, but how to balance the benefits of transparency against the risks of exploitation.

The key questions remaining are: How will organizations secure their systems when AI can mine public code for attack paths? What new standards and protocols will emerge to address the changing threat landscape? And how will the healthtech sector adapt to the shift towards secrecy while keeping the benefits of openness and collaboration?