Apple Unveils New Privacy-Preserving AI Framework at WWDC 2024

At WWDC 2024, Apple introduced a new AI framework that runs advanced machine learning models directly on devices. It’s designed to deliver intelligent features without sending user data to the cloud. The system, called OnDeviceAI, powers capabilities like real-time photo tagging, predictive text, and voice command interpretation—all processed locally.

Unlike competitors who rely on centralized servers, Apple’s approach keeps personal information on the iPhone, iPad, or Mac. That means facial recognition data, health inputs, and message drafts never leave your device. The company says this maintains user trust while still offering modern AI functionality.

The framework integrates deeply with iOS 18, macOS 15, iPadOS 18, and watchOS 11. Developers can access OnDeviceAI through updated APIs in Xcode. Apple claims inference speeds are up to 30% faster than previous on-device models, with improved accuracy in voice and image recognition tasks.

How It Works

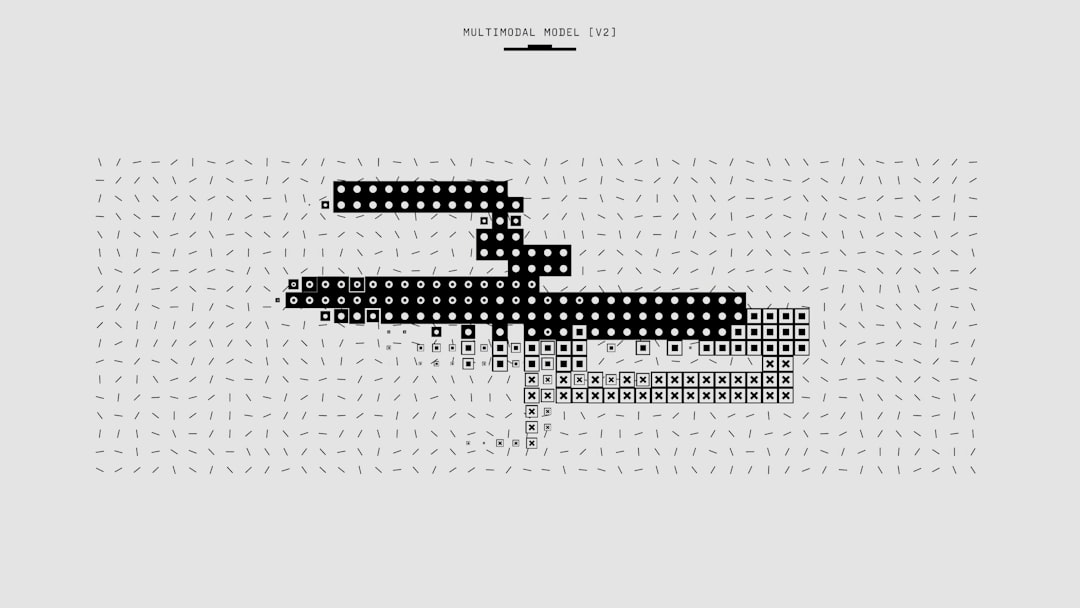

OnDeviceAI uses a compressed neural network architecture optimized for Apple’s silicon. The models are pre-trained and delivered through OS updates, reducing the need for continuous data collection. Once installed, they adapt slightly based on user behavior—but the adaptations stay local.
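Apple hasn’t detailed how OnDeviceAI compresses its models. One widely used technique is weight quantization, which stores each weight as a small integer plus a shared scale factor. The sketch below shows symmetric 8-bit quantization in Python. The function names are hypothetical, and this illustrates the general technique, not Apple’s pipeline.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: floats -> integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One shared scale maps the largest-magnitude weight to +/-127.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [max(-127, min(127, round(w / scale))) for w in weights], scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Approximate reconstruction of the original weights at inference time."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.0, 0.333, 0.9]  # hypothetical layer weights
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    print(q)
    print(max(abs(a - b) for a, b in zip(weights, restored)))
```

Storing 8-bit integers instead of 32-bit floats cuts weight storage roughly fourfold, at the cost of a rounding error of at most half the scale per weight.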

The system runs on the Neural Engine in A-series and M-series chips. These dedicated processors handle AI workloads efficiently, minimizing battery drain. Apple says the Neural Engine in the A17 Pro can perform up to 35 trillion operations per second, making complex AI tasks feasible on mobile devices.
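A peak figure like 35 trillion operations per second translates into latency with simple division. The sketch below assumes a hypothetical model that needs 10 billion operations per inference and a hypothetical 30% utilization of peak throughput; both numbers are illustrative assumptions, not Apple figures.

```python
def inference_seconds(ops_per_inference: float,
                      peak_ops_per_second: float,
                      utilization: float = 1.0) -> float:
    """Back-of-envelope latency: work divided by the throughput actually achieved."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return ops_per_inference / (peak_ops_per_second * utilization)


A17_PRO_PEAK = 35e12  # Apple's stated Neural Engine peak, operations per second

# Hypothetical model size and utilization, chosen only for illustration.
latency = inference_seconds(10e9, A17_PRO_PEAK, utilization=0.3)
print(f"{latency * 1000:.2f} ms per inference")  # prints "0.95 ms per inference"
```

Under these assumptions, even a modest fraction of peak throughput finishes such a model in about a millisecond, which is the kind of headroom that makes local photo tagging or voice interpretation practical.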

For developers, the API allows access to core AI functions without exposing raw user data. An app can, for example, detect objects in photos using OnDeviceAI—but it can’t extract or store the model’s internal representations. Permissions still apply: users must grant access to photos, microphone, or other inputs.
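The boundary described above can be illustrated with a small wrapper: the app gets labels back, while the model’s internal embedding and the permission check stay inside. Everything here (the `ObjectDetector` class, the `"photos"` permission string, the 0.5 threshold, the model’s return shape) is hypothetical, since OnDeviceAI’s actual API isn’t shown in this article. In Python, the double-underscore attribute is a naming convention, not hard enforcement.

```python
from typing import Callable

# Hypothetical model signature: returns (embedding, {label: confidence}).
Model = Callable[[bytes], tuple[list[float], dict[str, float]]]


class AccessDenied(Exception):
    """Raised when the user has not granted the required permission."""


class ObjectDetector:
    def __init__(self, model: Model, threshold: float = 0.5):
        self.__model = model          # name-mangled: not part of the public surface
        self.__threshold = threshold

    def detect(self, photo: bytes, granted: set[str]) -> list[str]:
        """Return object labels only; the embedding never leaves this method."""
        if "photos" not in granted:
            raise AccessDenied("photo access has not been granted")
        _embedding, scores = self.__model(photo)
        return sorted(label for label, conf in scores.items()
                      if conf >= self.__threshold)


if __name__ == "__main__":
    fake_model: Model = lambda photo: ([0.1, 0.7, 0.2],
                                       {"dog": 0.92, "ball": 0.61, "cat": 0.08})
    detector = ObjectDetector(fake_model)
    print(detector.detect(b"photo-bytes", granted={"photos"}))  # ['ball', 'dog']
```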

Background: Apple’s Long-Standing Privacy Strategy

Apple’s move into on-device AI didn’t happen overnight. The company has been building toward it for over a decade. Touch ID, introduced with the iPhone 5s in 2013, stored fingerprint data in the Secure Enclave rather than uploading it. In 2014, with the iPhone 6 and iOS 8, Apple went further: data on the device was encrypted with a key tied to the user’s passcode, so Apple itself could not unlock it.

In 2016, Apple introduced Differential Privacy in iOS 10. It allowed the company to collect broad usage trends, like emoji frequency or QuickType word choices, without tying data to individuals. The technique added noise to each user’s data on the device before it was sent for aggregation, so no single report could be reliably traced back to the person who sent it. At the time, this was a bold stance, especially as rivals were building massive user profiles.
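Apple’s production mechanisms are more elaborate, but the core idea of adding noise before aggregation can be shown with randomized response, a classic local differential privacy technique. Each device answers truthfully only half the time and otherwise reports a coin flip, so any single report is deniable, yet the server can still recover the population rate. The 30% usage rate and the user count below are made up for illustration.

```python
import random


def randomize(truth: bool, rng: random.Random) -> bool:
    """Runs on the device: the true answer is reported only half the time."""
    if rng.random() < 0.5:
        return truth               # answer honestly
    return rng.random() < 0.5      # otherwise report a fair coin flip


def estimate_rate(reports: list[bool]) -> float:
    """Runs on the server: recover the population rate from noisy reports.

    P(report is True) = 0.5 * rate + 0.25, so rate = (observed - 0.25) / 0.5.
    """
    observed = sum(reports) / len(reports)
    return (observed - 0.25) / 0.5


if __name__ == "__main__":
    rng = random.Random(42)
    true_rate = 0.3  # hypothetical share of users who use a given emoji
    users = [rng.random() < true_rate for _ in range(20_000)]
    reports = [randomize(u, rng) for u in users]
    print(f"estimated rate: {estimate_rate(reports):.3f}")
```

The estimate is unbiased, but its variance is higher than with raw data, which is why this style of collection only produces useful trends across large populations.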

In 2017, Core ML launched with iOS 11. It gave developers a way to run machine learning models on-device. Early use cases were limited (face detection in photos, basic language parsing), but it laid the foundation. Over the next six years, Apple quietly improved Neural Engine performance with each chip generation. The A12 in 2018 brought 5 trillion operations per second. By 2023, the A17 Pro hit 35 trillion.

Meanwhile, the industry shifted. Google, Amazon, and Meta pushed AI through cloud-based models. They argued cloud processing offered better accuracy and scalability. But it required sending user data to remote servers. Apple held back, insisting privacy wasn’t a feature—it was a right. Executives repeated the phrase at every major event: “We don’t want your data. We don’t need it.”

That philosophy wasn’t without trade-offs. By 2020, Siri was widely seen as lagging behind Alexa and Google Assistant in understanding complex queries. Apple’s reluctance to collect and retain user data limited how much the assistant could learn. The company also delayed launching advanced photo search features, waiting until on-device models could handle facial clustering securely.

Now, with OnDeviceAI, Apple believes it’s caught up—and differentiated itself. The framework isn’t just a technical upgrade. It’s the culmination of a strategy forged in the wake of the 2013 Snowden revelations and reinforced during the 2016 FBI-iPhone encryption battle. Privacy isn’t a marketing angle. It’s embedded in the architecture.

What This Means For You

If you’re building apps for Apple’s ecosystem, OnDeviceAI gives you powerful AI tools, but within strict boundaries. Here’s how that plays out in real scenarios:

Scenario 1: Health and Fitness App Developer

You run a meditation app that tracks breathing patterns using the iPhone’s microphone. Previously, you might have sent audio snippets to a server to analyze inhale-exhale cycles. Now, with OnDeviceAI, the entire analysis happens on the device. You get the timing data you need (duration, rhythm, pauses) but never access the raw audio. Users don’t have to worry about voice recordings being stored or misused. And because the sensitive data never leaves the phone, meeting HIPAA-style privacy expectations gets much simpler: there are no server-side recordings to encrypt, store, or secure.
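Assuming the microphone audio has already been reduced on the device to a per-frame loudness envelope, turning it into timing data is straightforward. The sketch below is hypothetical: the frame rate, threshold, and names are illustrative, and a production app would rely on OnDeviceAI’s models rather than a fixed threshold. Only durations leave the function, never audio.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass
class BreathSummary:
    breath_durations: list[float]  # seconds of each detected breath sound
    pause_durations: list[float]   # seconds of quiet between consecutive breaths


def summarize_breathing(envelope: list[float], frame_rate: float,
                        threshold: float) -> BreathSummary:
    """Convert a loudness envelope into timing data only (no audio is kept)."""
    # Collapse frames into alternating runs of (is_breath, frame_count).
    runs = [(active, len(list(group)))
            for active, group in groupby(v >= threshold for v in envelope)]
    breaths, pauses = [], []
    for i, (active, count) in enumerate(runs):
        seconds = count / frame_rate
        if active:
            breaths.append(seconds)
        elif 0 < i < len(runs) - 1:  # quiet run bounded by breaths on both sides
            pauses.append(seconds)
    return BreathSummary(breaths, pauses)


if __name__ == "__main__":
    # Hypothetical envelope sampled at 2 frames per second.
    env = [0.0, 0.1, 0.6, 0.7, 0.8, 0.2, 0.1, 0.1, 0.5, 0.9, 0.1]
    print(summarize_breathing(env, frame_rate=2.0, threshold=0.5))
```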

Scenario 2: Independent App Founder

You’re launching a note-taking app that highlights action items from meeting transcripts. Instead of uploading recordings to the cloud for speech-to-text and summarization, OnDeviceAI lets you process everything locally. The transcription model runs in the background, identifies tasks like “email the client” or “schedule review,” and tags them. Even if your app syncs notes across devices via iCloud, the AI processing remains private. This becomes a selling point: “Your meetings stay yours.” You avoid the cost and risk of maintaining secure servers, and users trust you more because there are no stored recordings on your side for an attacker to breach.
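The tagging step can be sketched with a simple rule-based stand-in: split the transcript into sentences, strip a conversational lead-in such as “we need to,” and keep sentences that start with an action verb. The verb and phrase lists are hypothetical, and an on-device language model would handle far more phrasings; the point is that everything runs locally on text the device already has.

```python
import re

# Hypothetical vocabularies; a real on-device model would replace these rules.
ACTION_RE = re.compile(r"^(?:email|schedule|send|call|review|book|follow up)\b")
LEAD_IN_RE = re.compile(r"^(?:we need to|i'll|i will|let's|please|remember to)\s+")


def extract_action_items(transcript: str) -> list[str]:
    """Return lowercase action items found in a plain-text transcript."""
    items = []
    for sentence in re.split(r"(?<=[.!?])\s+", transcript.strip()):
        text = sentence.rstrip(".!?").lower()
        core = LEAD_IN_RE.sub("", text)  # "we need to email x" -> "email x"
        if ACTION_RE.match(core):
            items.append(core)
    return items


if __name__ == "__main__":
    notes = ("Thanks for joining. We need to email the client by Friday. "
             "Let's schedule review for next week. The numbers look good.")
    print(extract_action_items(notes))
```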

Scenario 3: Enterprise Software Builder

Your company develops workflow tools for legal teams. Lawyers need to search through hours of depositions or contracts quickly. OnDeviceAI enables semantic search—understanding context, not just keywords—without sending documents to external AI services. A lawyer can ask, “Show me clauses about indemnification in vendor agreements from 2022,” and the app returns results based on on-device inference. Since law firms are bound by confidentiality rules, this local processing removes a major adoption barrier. You don’t need to convince IT departments to allow cloud AI gateways. The intelligence is built into the device, already trusted.
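Semantic search of this kind usually comes down to comparing embedding vectors. The sketch below assumes each clause has already been embedded on the device by some model (the three-dimensional vectors are toy stand-ins for real embeddings), filters by document type and year, then ranks the remaining clauses by cosine similarity to the query’s embedding. The `Clause` fields and function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Clause:
    text: str
    doc_type: str
    year: int
    embedding: list[float]  # assumed to come from an on-device embedding model


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def semantic_search(query_vec: list[float], clauses: list[Clause],
                    doc_type: str, year: int, top_k: int = 3) -> list[Clause]:
    """Filter on metadata, then rank by meaning rather than shared keywords."""
    matches = [c for c in clauses if c.doc_type == doc_type and c.year == year]
    matches.sort(key=lambda c: cosine(query_vec, c.embedding), reverse=True)
    return matches[:top_k]


if __name__ == "__main__":
    library = [
        Clause("Supplier shall hold Customer harmless from third-party claims.",
               "vendor agreement", 2022, [0.9, 0.1, 0.0]),
        Clause("Invoices are payable within 30 days.",
               "vendor agreement", 2022, [0.1, 0.9, 0.1]),
        Clause("Supplier shall indemnify Customer for losses.",
               "vendor agreement", 2021, [0.95, 0.05, 0.0]),
    ]
    query = [1.0, 0.0, 0.0]  # toy embedding of "clauses about indemnification"
    for clause in semantic_search(query, library, "vendor agreement", 2022):
        print(clause.text)
```

The top result never uses the word “indemnification”; it ranks first because its embedding sits close to the query’s, which is the difference between semantic and keyword search.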

Competitive Landscape: A Different Path

Apple’s on-device strategy stands in contrast to how most tech giants handle AI. Google’s Gemini models rely on cloud processing for features like photo search, document summarization, and voice commands. While Google offers some on-device features, the most advanced tools require server access. That means photos, messages, and voice data are transmitted—sometimes stored—for model improvement.

Amazon’s Alexa operates almost entirely in the cloud. Every command goes to AWS servers, where it’s parsed, acted upon, and often retained. Meta takes a hybrid approach: basic image filters run locally on Instagram, but content recommendations, ad targeting, and Reels ranking depend on massive cloud-based models trained on user behavior.

Microsoft sits in the middle. Windows 11 includes some local AI features, and the company promotes privacy in consumer messaging. But its AI push is tightly tied to Azure. Copilot, its flagship assistant, runs primarily in the cloud and integrates deeply with enterprise data—email, Teams chats, SharePoint files. That creates efficiency, but also risk: if a company uses Copilot, it must trust Microsoft with sensitive internal communications.

Apple’s bet is that users and organizations will prefer privacy over raw power. The company doesn’t deny that cloud models can be more accurate, but it argues the difference is narrowing. With chips like the M3 and A17 Pro, on-device models can now handle tasks once thought impossible locally, such as generating image captions or summarizing long texts. And as models get more efficient, the gap keeps shrinking.

This isn’t just about ethics. It’s a competitive moat. By controlling the chip, the OS, and the AI stack, Apple optimizes performance at every layer. Google designs the Tensor chips in its own Pixel phones, but most Android devices run on other companies’ hardware, and Meta’s apps run almost entirely on phones made by others. Microsoft’s AI works across devices, but can’t match the tight integration of an iPhone running iOS 18 with OnDeviceAI. That vertical control allows Apple to push the limits of what local AI can do while keeping data locked down.

What Happens Next

OnDeviceAI is live, but it’s just the beginning. Several key questions remain:

Can Apple scale this approach to more complex AI tasks? Right now, the framework handles inference well—applying trained models to user data. But training those models still happens in Apple’s labs, using anonymized datasets. The company hasn’t explained how it plans to improve models over time without collecting user data. Will future updates rely solely on synthetic data or public datasets? Or will Apple develop new methods for private model improvement?

How will developers adopt the tools? Early feedback from the dev community is positive, but integration takes time. Many apps still depend on cloud APIs for AI features. Rewriting those systems to use OnDeviceAI means rearchitecting workflows, rethinking data pipelines, and testing performance across older devices. Apple will need strong documentation, sample code, and debugging tools to make the transition smooth.

Will users notice the difference? Privacy is invisible until it’s violated. Most people don’t know where their data goes—only that Siri works, or their photos are easy to find. Apple will have to educate users on why on-device AI matters. That means clearer prompts, better privacy labels in the App Store, and maybe even marketing campaigns showing what doesn’t happen: no uploads, no tracking, no surprises.

And finally, can Apple maintain performance parity? If cloud-based assistants keep advancing faster, users may start to see Siri as “less smart.” Apple’s challenge isn’t just technical—it’s perceptual. The company must prove that privacy doesn’t mean compromise.

We’re entering a new phase in AI. Not defined by scale, but by boundaries. Apple isn’t leading the race to the biggest model. It’s racing to the most trusted one. That’s a different finish line. And it’s one the company may be uniquely built to cross.